When modern AI comes up, terms like "neural network" or "deep learning" are often mentioned. That sounds complicated – and the math behind it is. But the basic principle can be explained simply.

The Inspiration: The Brain

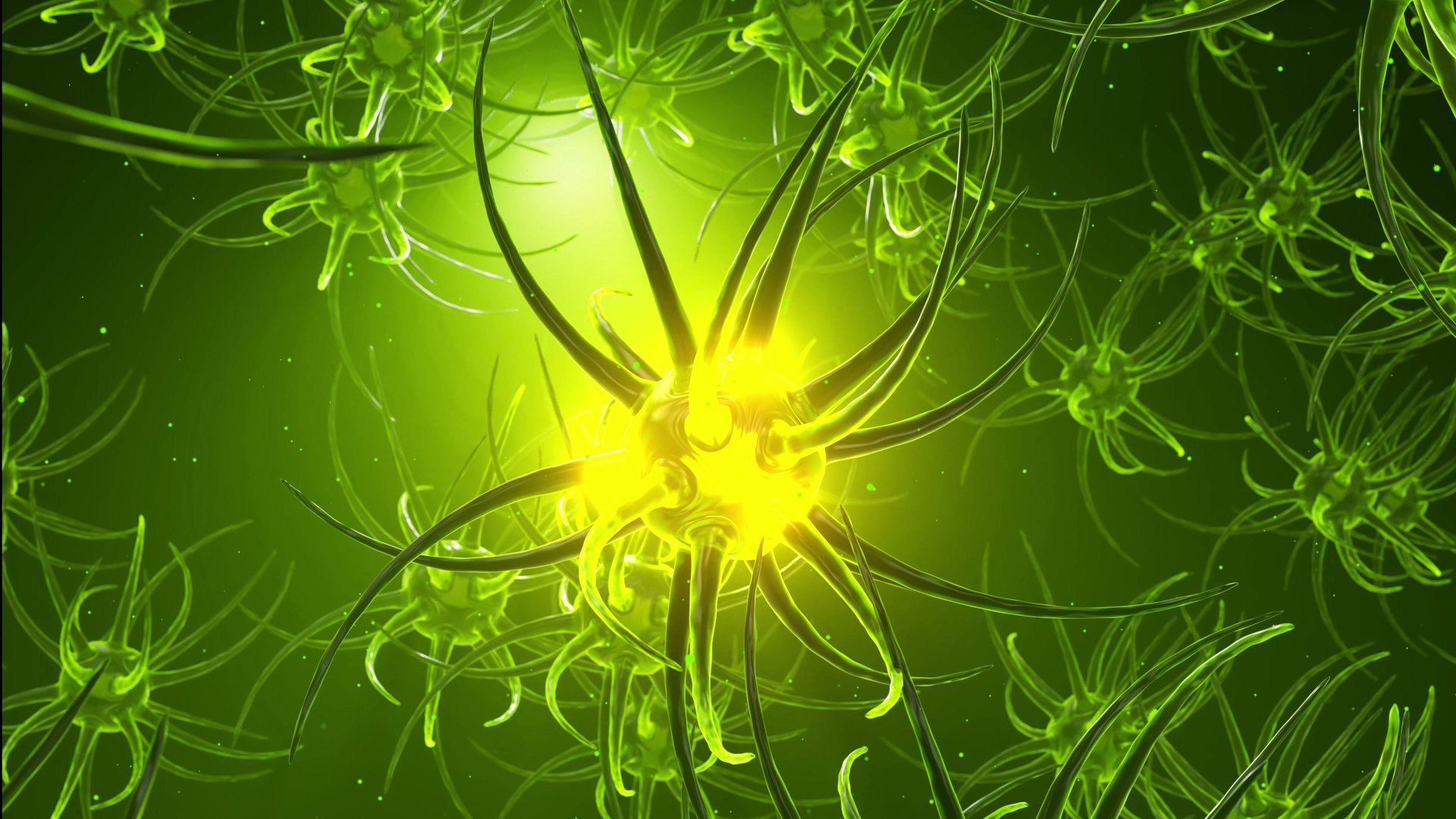

Neural networks are inspired by the human brain. Our brain consists of billions of nerve cells (neurons) that are connected to each other. Each neuron receives signals, processes them, and passes signals on.

Artificial neural networks work on a similar principle, only in a far simpler form. They consist of digital "neurons" that receive numbers, process them, and pass the results on.
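To make "receive numbers, process them, pass them on" concrete, here is a minimal sketch of a single digital neuron. It assumes the most common textbook form: each input is multiplied by a weight, the products are summed together with a bias, and the result goes through an activation function (here the sigmoid). The names `neuron`, `weights`, and `bias` and all numbers are illustrative and not taken from any particular library.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of the inputs plus a bias, passed through a sigmoid."""
    # Multiply each incoming number by the weight of its connection and add everything up
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Squash the sum into the range (0, 1); the sigmoid is one common choice of activation
    return 1 / (1 + math.exp(-total))

# Three input values, three weights, one bias (arbitrary illustrative numbers)
output = neuron([0.5, 0.2, 0.9], [0.4, -0.6, 0.8], bias=0.1)
print(round(output, 3))  # prints 0.711
```

The output is again just a number, which can then be passed on to the next neurons.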

Layers and Connections

A neural network is organized in layers:

- Input layer: This is where the data comes in – for example, the pixels of an image

- Hidden layers: This is where the actual processing happens

- Output layer: This is where the result comes out – for example, "That is a dog"

Each neuron in a layer is connected to many neurons in the next layer, often to all of them. Each connection has a "weight", a number that determines how strongly a signal is passed on: some connections are strong, some are weak.
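The layer structure can be sketched in a few lines of plain Python. This assumes a "fully connected" layer, where every neuron sees every value from the previous layer; the tiny 3 → 2 → 1 network and its weights are hypothetical and chosen only for illustration. A weight near zero barely passes a signal on, a large weight passes it on strongly, and a negative weight suppresses it.

```python
import math

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

def layer(inputs, weights, biases):
    """Compute one fully connected layer.

    weights[j][i] is the strength of the connection from input i to neuron j,
    so every neuron in this layer receives every value from the previous layer.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(x * w for x, w in zip(inputs, neuron_weights)) + bias
        outputs.append(sigmoid(total))
    return outputs

# Hypothetical tiny network: 3 inputs -> 2 hidden neurons -> 1 output neuron
x = [1.0, 0.0, 0.5]                                   # input layer
hidden = layer(x, weights=[[0.2, -0.4, 0.9], [-0.7, 0.3, 0.1]], biases=[0.0, 0.1])
output = layer(hidden, weights=[[1.5, -2.0]], biases=[0.2])
print(hidden, output)
```

The output of one layer simply becomes the input of the next; that is all "passing signals on" means here.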

Learning Through Adjustment

The crucial trick: these weights are adjusted during training.

Here's how it works:

- The network sees an example (e.g., an image)

- It gives an answer (e.g., "Cat")

- It is told whether the answer was correct (e.g., "No, that was a dog")

- The weights are adjusted slightly so that the network performs better next time

After thousands of such iterations, the network has learned to distinguish dogs from cats – without anyone explaining what a dog looks like.
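The four steps above can be sketched as a training loop for a single sigmoid neuron. This is a simplified stand-in, not how large networks are trained in full: real deep networks use backpropagation to compute the adjustment for every weight in every layer, but the loop of "answer, compare, adjust" is the same. The toy data (two numbers per example, label 1 for "dog" and 0 for "cat"), the learning rate, and the number of passes are all hypothetical.

```python
import math

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

# Hypothetical toy data: two input numbers per example, label 1 ("dog") or 0 ("cat").
# Here the label is simply 1 when the first number is larger than the second.
examples = [([0.9, 0.1], 1), ([0.8, 0.3], 1), ([0.7, 0.2], 1),
            ([0.1, 0.9], 0), ([0.3, 0.8], 0), ([0.2, 0.6], 0)]

weights = [0.0, 0.0]
bias = 0.0
learning_rate = 0.5

for epoch in range(1000):  # 1000 passes over 6 examples = 6000 adjustments
    for inputs, label in examples:
        # 1. The network sees an example and 2. gives an answer between 0 and 1
        prediction = sigmoid(sum(x * w for x, w in zip(inputs, weights)) + bias)
        # 3. It is told the correct label and measures how far off it was
        error = prediction - label
        # 4. Each weight is nudged in the direction that reduces the error
        #    (gradient descent on the cross-entropy loss of one sigmoid neuron)
        weights = [w - learning_rate * error * x for w, x in zip(weights, inputs)]
        bias -= learning_rate * error

def predict(inputs):
    return sigmoid(sum(x * w for x, w in zip(inputs, weights)) + bias)

print(round(predict([0.85, 0.2])), round(predict([0.15, 0.7])))  # prints 1 0
```

Nobody wrote the rule "first number larger means dog" into the code; the weights end up encoding it purely from the examples.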

Why "Deep Learning"?

"Deep" refers to the depth of the network, that is, the number of hidden layers. Early networks had only a few layers; some modern networks have hundreds.

More layers allow a network to recognize more complex patterns. In image recognition, for example:

- Early layers recognize simple patterns (edges, lines)

- Middle layers recognize combinations (shapes, textures)

- Later layers recognize complex concepts (faces, objects)
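Structurally, "deeper" just means more hidden layers stacked between input and output. The sketch below builds fully connected networks from a list of layer sizes and passes an input through every layer in turn. The weights are random and untrained, so the output is meaningless; the split into edge, shape, and object detectors described above only emerges through training, which this sketch does not show. The helper names `make_network` and `forward` are hypothetical.

```python
import math
import random

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

def make_network(layer_sizes, seed=0):
    """Create random weights for a fully connected network.

    layer_sizes lists the number of neurons per layer, e.g. [4, 8, 8, 2]:
    the first entry is the input layer, the last the output layer,
    and every entry in between is a hidden layer.
    """
    rng = random.Random(seed)
    network = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        network.append((weights, biases))
    return network

def forward(network, inputs):
    """Pass the inputs through every layer in turn; each layer's output feeds the next."""
    values = inputs
    for weights, biases in network:
        values = [sigmoid(sum(x * w for x, w in zip(values, row)) + b)
                  for row, b in zip(weights, biases)]
    return values

shallow = make_network([4, 3, 2])           # 1 hidden layer
deep = make_network([4, 8, 8, 8, 8, 8, 2])  # 5 hidden layers
print(len(shallow) - 1, len(deep) - 1)      # prints 1 5 (number of hidden layers)
print(forward(deep, [0.1, 0.5, 0.9, 0.3]))
```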

A Practical Example

Imagine a network designed to recognize handwritten digits:

- Input: An image of a handwritten digit (e.g., a "7")

- Early layers: Recognize strokes and curves

- Middle layers: Combine these into typical shapes

- Output: "This digit is a 7" (with 97% probability)
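A statement like "7 with 97% probability" typically comes from the output layer producing one raw score per possible digit, which is then converted into probabilities. The sketch below assumes the softmax function, a common choice for this step; the ten scores are hypothetical, so the printed percentage will not match the 97% from the example.

```python
import math

def softmax(scores):
    """Turn raw output scores into probabilities that are positive and sum to 1."""
    largest = max(scores)  # subtracting the maximum avoids overflow in exp()
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores from the output layer, one per digit 0-9
scores = [0.1, -1.2, 0.3, 0.5, -0.4, 0.0, -0.8, 4.5, 0.2, 1.0]
probs = softmax(scores)
best = max(range(10), key=lambda d: probs[d])
print(f"This digit is a {best} ({probs[best]:.0%} probability)")
```

The digit with the highest score always gets the highest probability; softmax only rescales the scores so they can be read as percentages.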

Limitations of Neural Networks

Despite their impressive capabilities, neural networks have weaknesses:

- They need many examples to learn

- They don't truly understand – they only recognize patterns

- They can learn wrong patterns if the training data is biased

- What they've learned is often hard to trace

Conclusion

Neural networks are not magical intelligence. They are mathematical structures that learn from examples, inspired by the brain but much simpler. Their success rests on the fact that, with enough data and computing power, they can recognize astonishingly complex patterns without anyone telling them what to look for.